In my final year at RUG I landed a research thesis in collaboration with TNO, where I studied the intersection of software engineering, artificial intelligence and sustainability. The question was simple: can language models help us rewrite code to reduce its energy consumption?

During my initial reading I saw that the energy consumption of software systems is mostly studied from the hardware perspective. A lot has been done to improve the efficiency of processors, memory and other components. I, however, wanted to study the efficiency of the code itself.

I experimented by running code samples multiple times on TNO’s bare-metal Linux servers, measuring energy consumption from the counters present on the AMD processors, while a synthetic load was running to eliminate spikes in the measured energy consumption.

The Greenify Pipeline

The entire experiment was created as a pipeline in Python which processed around 1,700 functions from the Mercury, HumanEval and MBPP datasets.

The input code samples were first profiled using pyJoules, which reads Intel RAPL counters, to capture the baseline energy consumption by running each sample’s test suite on repeat for one second.
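
To make the measurement loop concrete, here is a minimal sketch of the idea rather than the pipeline's actual code. It does not use pyJoules; instead it reads a cumulative energy counter directly, assuming the Linux powercap file `/sys/class/powercap/intel-rapl:0/energy_uj` exists and is readable on the machine. The names `profile_energy`, `read_rapl_uj` and `run_tests` are hypothetical, and counter wraparound is ignored for brevity.

```python
import time
from pathlib import Path
from typing import Callable

# Assumption: package-0 energy counter (microjoules) exposed by Linux powercap.
RAPL_COUNTER = Path("/sys/class/powercap/intel-rapl:0/energy_uj")


def read_rapl_uj() -> int:
    """Read the cumulative energy counter in microjoules."""
    return int(RAPL_COUNTER.read_text())


def profile_energy(
    run_tests: Callable[[], None],
    budget_s: float = 1.0,
    read_energy_uj: Callable[[], int] = read_rapl_uj,
    clock: Callable[[], float] = time.perf_counter,
) -> dict:
    """Run the test suite on repeat until the time budget is used up,
    then report the energy consumed in total and per run."""
    start_uj = read_energy_uj()
    start = clock()
    runs = 0
    while True:
        run_tests()
        runs += 1
        if clock() - start >= budget_s:
            break
    end_uj = read_energy_uj()
    total_joules = (end_uj - start_uj) / 1e6  # microjoules to joules
    return {
        "runs": runs,
        "total_joules": total_joules,
        "joules_per_run": total_joules / runs,
    }
```

Passing the counter reader and the clock as parameters keeps the loop independent of the hardware, so the same function can be exercised with a fake counter on a machine without RAPL access.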

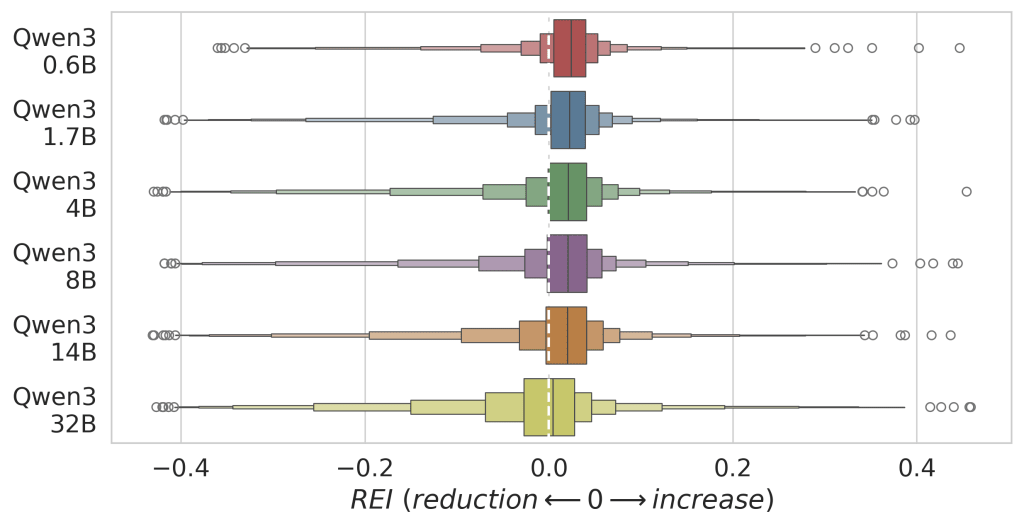

Next, the Qwen3 model family (ranging from 0.6B to 32B parameters) rewrote the code specifically for energy efficiency. These greenified samples were profiled the same way, and tests verified that the new code remained functionally identical to the original.
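
The functional check can be sketched roughly as follows. This is an illustration under assumptions, not the pipeline's implementation: it assumes the tests are plain `assert` statements held in a string, the helper name `passes_tests` is hypothetical, and a real setup would also need sandboxing and timeouts, since model-generated code is untrusted and may never terminate.

```python
def passes_tests(candidate_src: str, test_src: str) -> bool:
    """Return True if the candidate code defines what the tests need
    and every assertion in the test source holds."""
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)  # define the candidate function(s)
        exec(test_src, namespace)       # run assert-based tests against them
    except Exception:
        # Syntax errors, runtime errors and failed assertions all count as failure.
        return False
    return True
```

A greenified sample would only be kept for energy comparison if this check succeeds for the same tests that the baseline passes.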

Overall, over 13,000 greenified variations were generated and profiled to answer our question via statistics.

Larger Models Tend to Perform Better

The most intuitive finding was that larger models perform better than smaller ones, but the relationship is logarithmic, meaning that each increase in model size brings less additional benefit. The effect was also more pronounced for functional correctness than for energy efficiency improvement.

You can see from the plot below that only the Qwen3 32B model stands out as moving the relative energy impact distribution towards a reduction in consumed energy. In this graph, negative values mean a reduction (-0.2 means a 20% reduction) and positive values mean an increase in energy consumption. Zero, of course, represents no change in energy consumption between baseline and greenified code samples.
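
The relative energy impact described here can be written as a one-line calculation. The function name `relative_energy_impact` is my own label for this sketch; the formula is simply the change relative to the baseline, which matches the sign convention above.

```python
def relative_energy_impact(baseline_joules: float, greenified_joules: float) -> float:
    """Relative change in energy: negative means a reduction,
    positive means an increase, zero means no change."""
    if baseline_joules <= 0:
        raise ValueError("baseline energy must be positive")
    return (greenified_joules - baseline_joules) / baseline_joules
```

For example, a baseline of 10 J and a greenified measurement of 8 J gives -0.2, a 20% reduction.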

Garbage In, Average Out

However, perhaps the most critical finding was that the input code itself is the primary predictor of potential energy savings.

If the baseline code is already efficient enough, the language model cannot improve efficiency further. The models excel at exploiting obvious inefficiencies rather than at finding novel optimizations.

You may be asking yourself what “efficient enough” means, and that is a great question. From the point of view of language models, it seems to be anything that the model has learned as more efficient during training. Put differently, if your code is of below-average efficiency, models will help you improve it up to that plateau of average and no further.

This is a great sign for the automation of greenifying code. However, it also shifts the task from finding better models to better detection of inefficient code, which is what I asked myself next.

Predicting Energy Change

How easy is it to predict if our greenifying process will actually reduce the energy consumption of code? To make our process sustainable, we need to know before we greenify if a piece of code is inefficient enough to be worth processing. This is the question I wanted to answer using machine learning algorithms.

I trained Scikit-learn models on static code analysis metrics like Cyclomatic Complexity and Halstead Effort to predict two outcomes:

Will the code functionality break? Here our models could predict the outcome with high accuracy. The score used here evaluates how effective the model is at identifying samples where tests will pass after greenifying, as well as how good it is at identifying samples which will fail the tests after greenifying.

Will the generated code reduce energy consumption? Here our models scored only a hair better than guessing randomly.

After further analysis, I concluded that the static code analysis metrics we used fail to capture the information necessary to distinguish efficient code from inefficient code.
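
To give a sense of what such a static metric looks at, here is a rough approximation of cyclomatic complexity using only Python's standard `ast` module. It is a simplified sketch written for this post, not the tooling used in the experiment, and the counting rules (one point per branch, loop, exception handler, comprehension clause and extra boolean operand) are an assumption that may differ from dedicated tools.

```python
import ast

# Node types that each add one decision point in this simplified count.
_BRANCH_NODES = (
    ast.If, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler, ast.IfExp,
)


def cyclomatic_complexity(source: str) -> int:
    """Approximate cyclomatic complexity: 1 plus the number of decision points."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.comprehension):
            # One for the loop itself, one for each `if` filter.
            complexity += 1 + len(node.ifs)
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            complexity += len(node.values) - 1
    return complexity
```

The metric counts control-flow paths, not the cost of what happens on each path, which is consistent with it saying little about energy use: a single loop scores the same whether its body is cheap or expensive.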

The Returns on the Energy Invested

Finally, I must address the elephant in the room. While larger models produce better code, the improvement comes at a hefty price: energy. Rewriting code with a 32B parameter model will consume significantly more energy than using a smaller 7B model. Because of this, I modeled the balance between the energy cost of code generation and the energy savings from running the greenified code, considering only code variants where greenifying reduced energy consumption.

The results showed that while the largest model had the highest overall energy savings in greenified code, its “break-even point” (the number of times the code must run in order to pay back the generation cost) was the worst of all the models. This means that choosing a model is important even if we are guaranteed to always improve the energy efficiency of the input code sample.
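
The break-even point follows directly from that definition: the generation cost divided by the energy saved per run. Below is a minimal sketch of the arithmetic; the function and parameter names are hypothetical, and it assumes the per-run energy figures are stable averages.

```python
import math
from typing import Optional


def break_even_runs(
    generation_joules: float,
    baseline_joules_per_run: float,
    greenified_joules_per_run: float,
) -> Optional[int]:
    """Number of executions needed before the energy saved by the
    greenified code pays back the energy spent generating it.
    Returns None when the greenified code saves nothing."""
    saving_per_run = baseline_joules_per_run - greenified_joules_per_run
    if saving_per_run <= 0:
        return None  # the generation cost is never paid back
    return math.ceil(generation_joules / saving_per_run)
```

With purely illustrative numbers, a rewrite that cost 500 J to generate and saves 0.5 J per run breaks even after 1,000 runs.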

Practically, this means that model selection should also be a part of the greenifying process, which turns the entire process into a challenging balancing act (and that is excluding model training costs):

- Greenified code is more likely to be functionally correct if we use a larger model, so we want to use the largest model possible

- Larger models generally use more energy, so we want to use the smallest model possible

- Larger models tend to create larger energy savings than smaller models, but the choice also depends on how often the greenified code will run, because a large improvement is meaningless if the code does not get executed
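
One way to picture this balancing act is as a small selection rule. The sketch below is my own simplification, not the model from the thesis: it assumes each candidate model can be summarized by three hypothetical numbers (generation cost, probability of producing correct code, and energy saved per run when it does), and it picks the model with the highest expected net saving for a given number of expected runs.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelProfile:
    name: str
    generation_joules: float      # energy spent producing one rewrite
    pass_rate: float              # probability the rewrite passes the tests
    saving_joules_per_run: float  # energy saved per execution when it passes


def expected_net_saving(model: ModelProfile, expected_runs: int) -> float:
    """Expected energy saved over the code's lifetime minus generation cost."""
    return (
        model.pass_rate * model.saving_joules_per_run * expected_runs
        - model.generation_joules
    )


def pick_model(
    models: Iterable[ModelProfile], expected_runs: int
) -> Optional[ModelProfile]:
    """Return the model with the best expected net saving,
    or None if no model is expected to pay back its own cost."""
    best = max(models, key=lambda m: expected_net_saving(m, expected_runs))
    return best if expected_net_saving(best, expected_runs) > 0 else None
```

Under this simplification, code that runs rarely favors a small model or no greenifying at all, while code that runs very often can justify the largest one.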

Summary

Overall, this work shows a path forward for Green AI, and it does not require larger models. The results demonstrate that to make greenifying viable, we must focus on identifying inefficient code before optimizing it. Then we can ensure that the energy spent on generating code with language models is paid back by the energy saved when running it.

This project additionally delivers a validated dataset of paired energy measurements (before and after greenifying), published on Zenodo and HuggingFace and available to the research community, which will hopefully help future research. The paper can be downloaded from the RUG repository.